About Us

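欧亿体育(中国)股份有限公司, located at No. 218 Liuyong Road, Liuzhou, is a specialized enterprise. It combines the production and sale of intelligent prestressing tensioning equipment, prestressing tensioning jacks, prestressing anchorages, tools, metal and plastic products, and supporting products with prestressing engineering installation, construction, and prestressing research and development.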

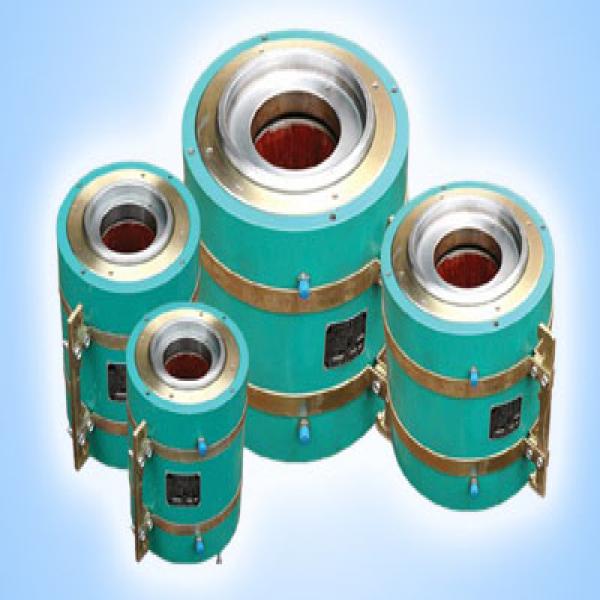

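Under the careful guidance of experts, and after years of exploration and testing, the company combined the technical strengths of OVM, HVM, and QM prestressing products to develop and launch the 赛姆 product series. All products in the series are easy to install, stable in quality, high in anchorage efficiency coefficient, and safe and reliable. The quality assurance system manages production in accordance with the ISO 9001:2000 international standard, and the products have passed testing by national authoritative testing bodies...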

赛姆 Prestressing

- The 赛姆佳 product series is easy to install, stable in quality, high in anchorage efficiency coefficient, and safe and reliable

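- The quality assurance system manages production in accordance with the ISO 9001:2000 international standard, and the products have passed testing by national authoritative testing bodies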

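- Products are widely used in prestressed concrete structures and steel structures, including highway bridges, railway bridges, high-rise buildings, hydropower projects, mines…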

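- Business now extends to every province, municipality, and autonomous region in China, with a well-established reputation and a strong record of completed projects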

- Dedicated to building an enterprise that endures, and always moving forward to make China's advanced prestressing industry a landmark

News

欧亿体育(中国)股份有限公司

Contact: Mr. Lu

Phone: 18677239860

Email:629399016@qq.com

Website: www.yiotabytes.com

Address: No. 218 Liuyong Road, Liuzhou, Guangxi